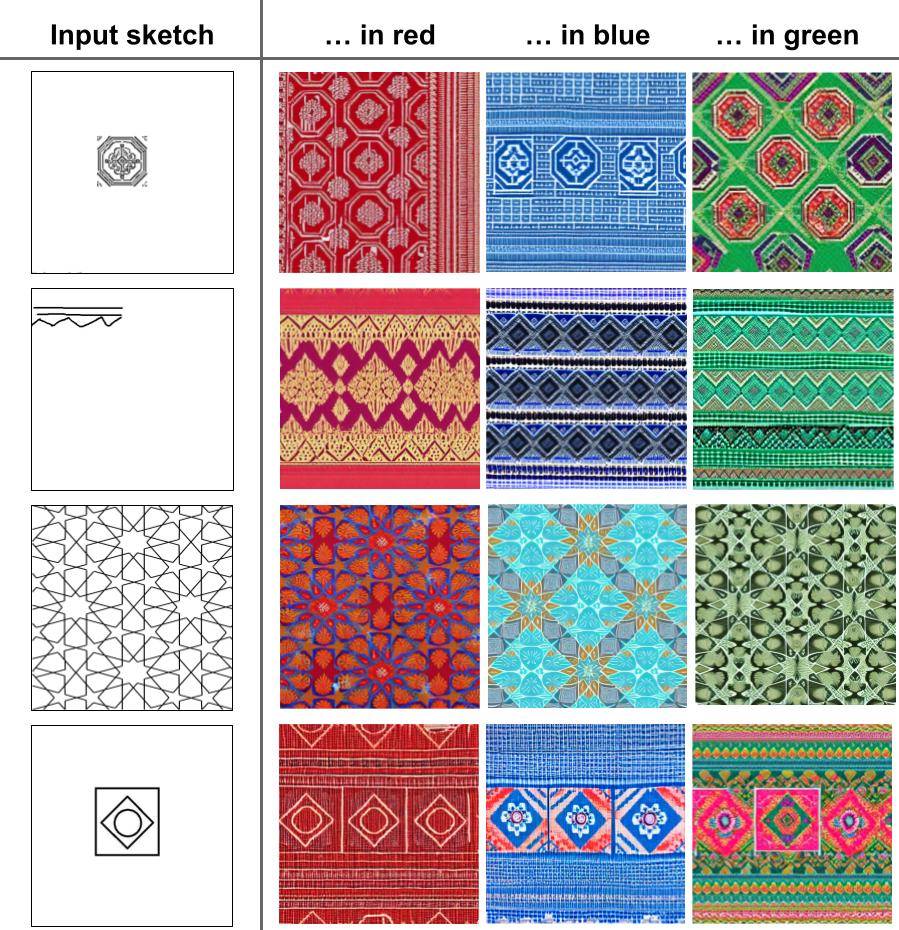

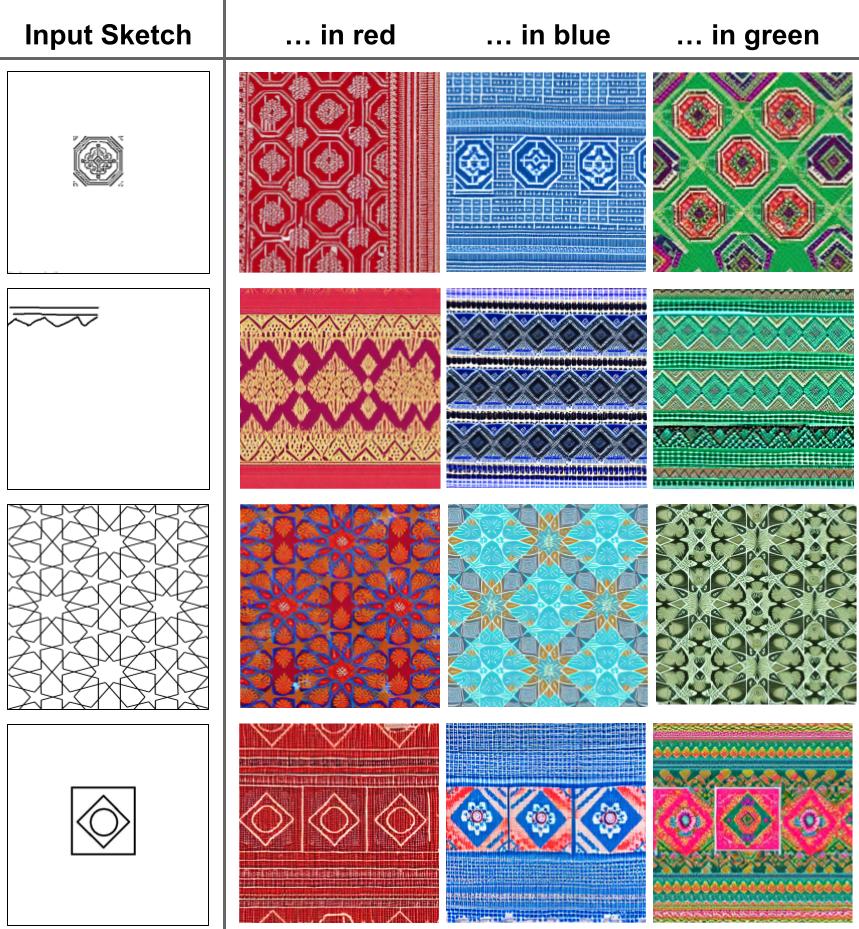

In this work, we propose a diffusion-based approach for generating

Thai textile designs from user-provided partial sketches. Our method

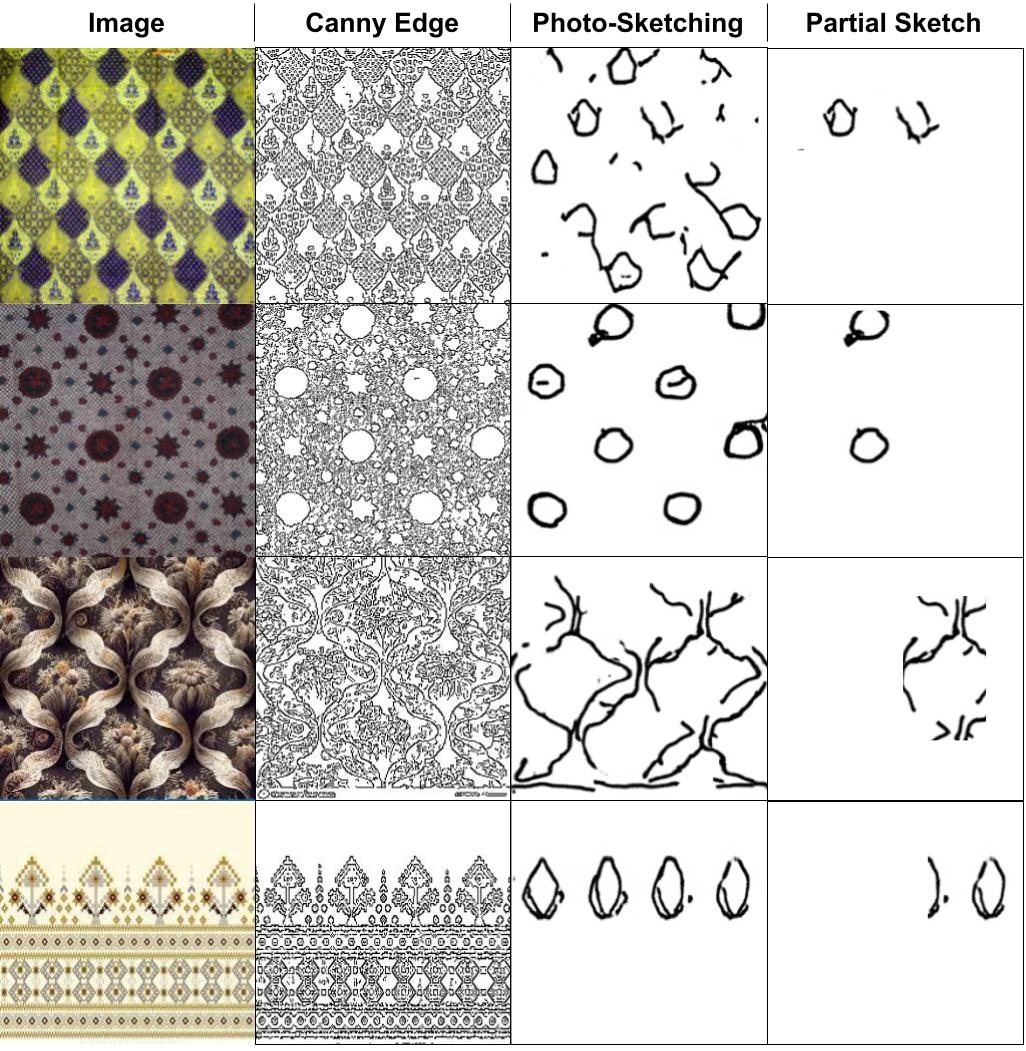

incorporates two key innovations: (1) training augmentation with

synthetically generated partial sketches that mimic human drawings,

and (2) text-based color control through user descriptions. To enhance

robustness and generalization, we employ a multi-stage training pipeline.

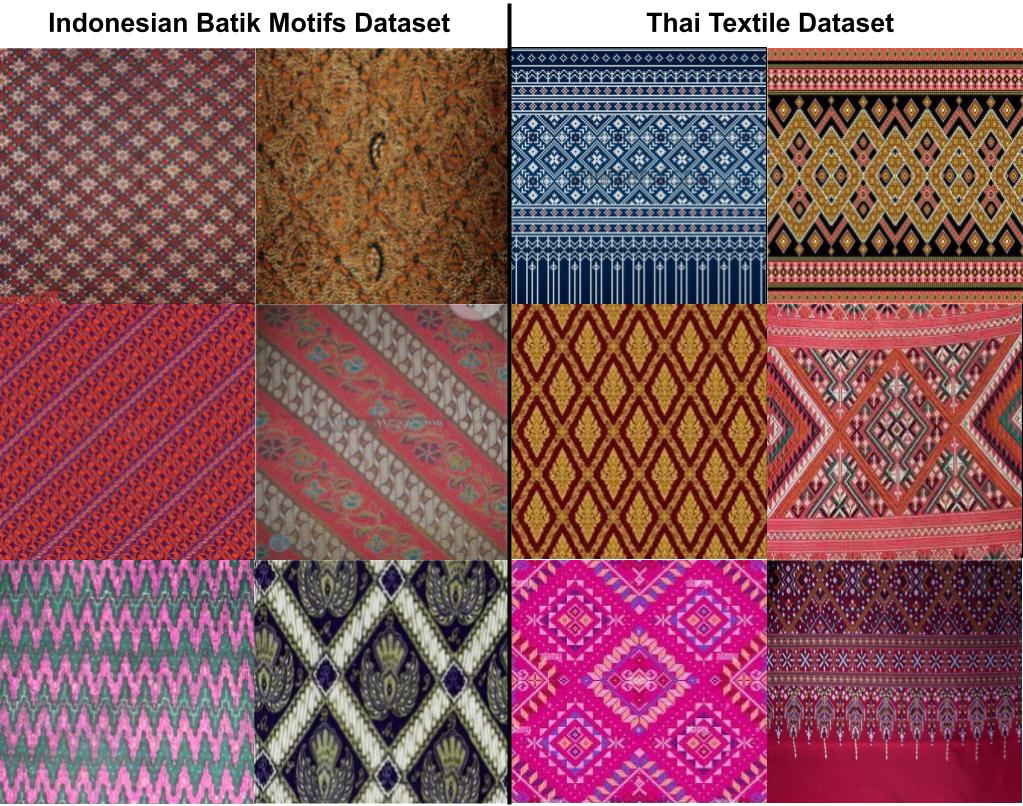

First, we leverage a pre-trained Stable Diffusion model. We then finetune the model on a larger dataset of Indonesian fabrics (which shares

stylistic similarities with Thai textiles) before specializing in a dataset of

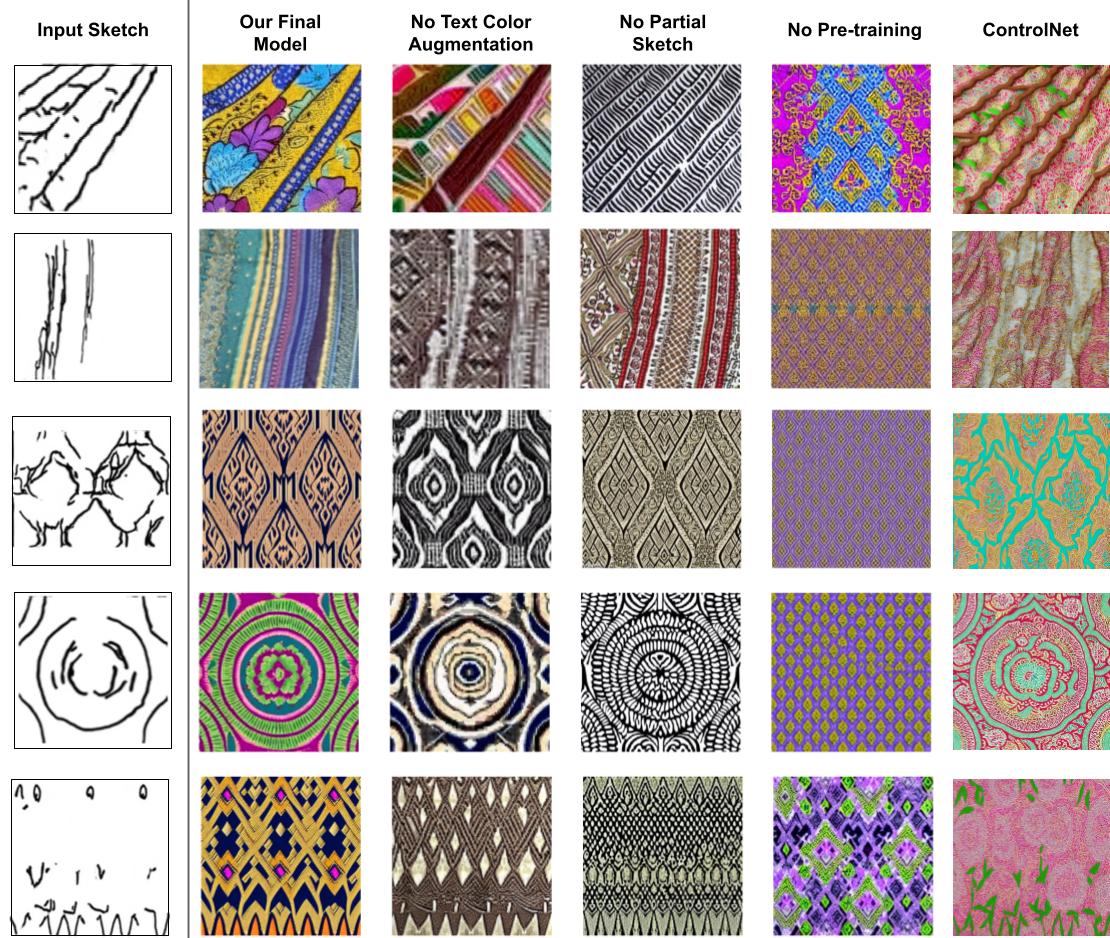

Thai textiles. Additionally, we implement curriculum learning, where

the model starts with complete sketches and gradually progresses to

more challenging partial sketches. Ablation studies demonstrate the

effectiveness of our approach, yielding robust and colorful designs that

adapt to various sketch styles and color instructions. This work opens

exciting avenues for future research in AI-assisted textile design, fostering

a seamless blend of human creativity and machine intelligence.

Keywords: diffusion, pattern generation, sketch-based image synthesis, image-to-image translation
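The abstract does not specify how partial sketches are synthesized or how the curriculum is scheduled. The following is only a minimal Python sketch under our own assumptions, not the authors' implementation: a sketch is represented as a list of strokes (each a list of points), a partial sketch is produced by randomly dropping whole strokes, and the fraction of kept strokes decreases linearly from 1.0 (complete sketches) to a floor over training. The names `keep_ratio`, `make_partial_sketch`, and `min_ratio` are hypothetical.

```python
import random
from typing import List, Tuple

Point = Tuple[float, float]
Stroke = List[Point]  # assumption: a stroke is an ordered list of (x, y) points


def keep_ratio(step: int, total_steps: int, min_ratio: float = 0.3) -> float:
    """Hypothetical linear curriculum: return the fraction of strokes to keep.

    At step 0 the sketch is complete (1.0); by total_steps the ratio has
    decreased linearly to min_ratio and stays there afterwards.
    """
    if total_steps <= 0:
        return 1.0
    progress = min(max(step / total_steps, 0.0), 1.0)
    return 1.0 - progress * (1.0 - min_ratio)


def make_partial_sketch(
    strokes: List[Stroke], ratio: float, rng: random.Random
) -> List[Stroke]:
    """Keep round(ratio * n) randomly chosen strokes (at least one).

    Stroke dropping is an assumed stand-in for the paper's synthetic
    partial-sketch generation; drawing order of kept strokes is preserved.
    """
    n = len(strokes)
    if n == 0:
        return []
    k = min(n, max(1, round(ratio * n)))
    kept = sorted(rng.sample(range(n), k))
    return [strokes[i] for i in kept]


if __name__ == "__main__":
    rng = random.Random(0)
    sketch = [
        [(0.0, 0.0), (1.0, 1.0)],
        [(1.0, 0.0), (0.0, 1.0)],
        [(0.5, 0.0), (0.5, 1.0)],
        [(0.0, 0.5), (1.0, 0.5)],
    ]
    for step in (0, 500, 1000):
        ratio = keep_ratio(step, total_steps=1000)
        partial = make_partial_sketch(sketch, ratio, rng)
        print(f"step={step} ratio={ratio:.2f} kept={len(partial)}/{len(sketch)}")
```

Dropping whole strokes rather than individual pixels keeps each remaining line intact, which is one plausible way to resemble an unfinished human drawing; a real pipeline could instead truncate strokes or erase regions.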
